Driving Without a Map

Imagine using a GPS that only looks 100 feet ahead. At every intersection, it picks the turn that seems best right now — no knowledge of the full route, no awareness of dead ends ahead, no ability to backtrack.

Most of the time, you get somewhere reasonable. But sometimes you end up stuck in a cul-de-sac, confidently told you have "arrived" when you are nowhere near your destination.

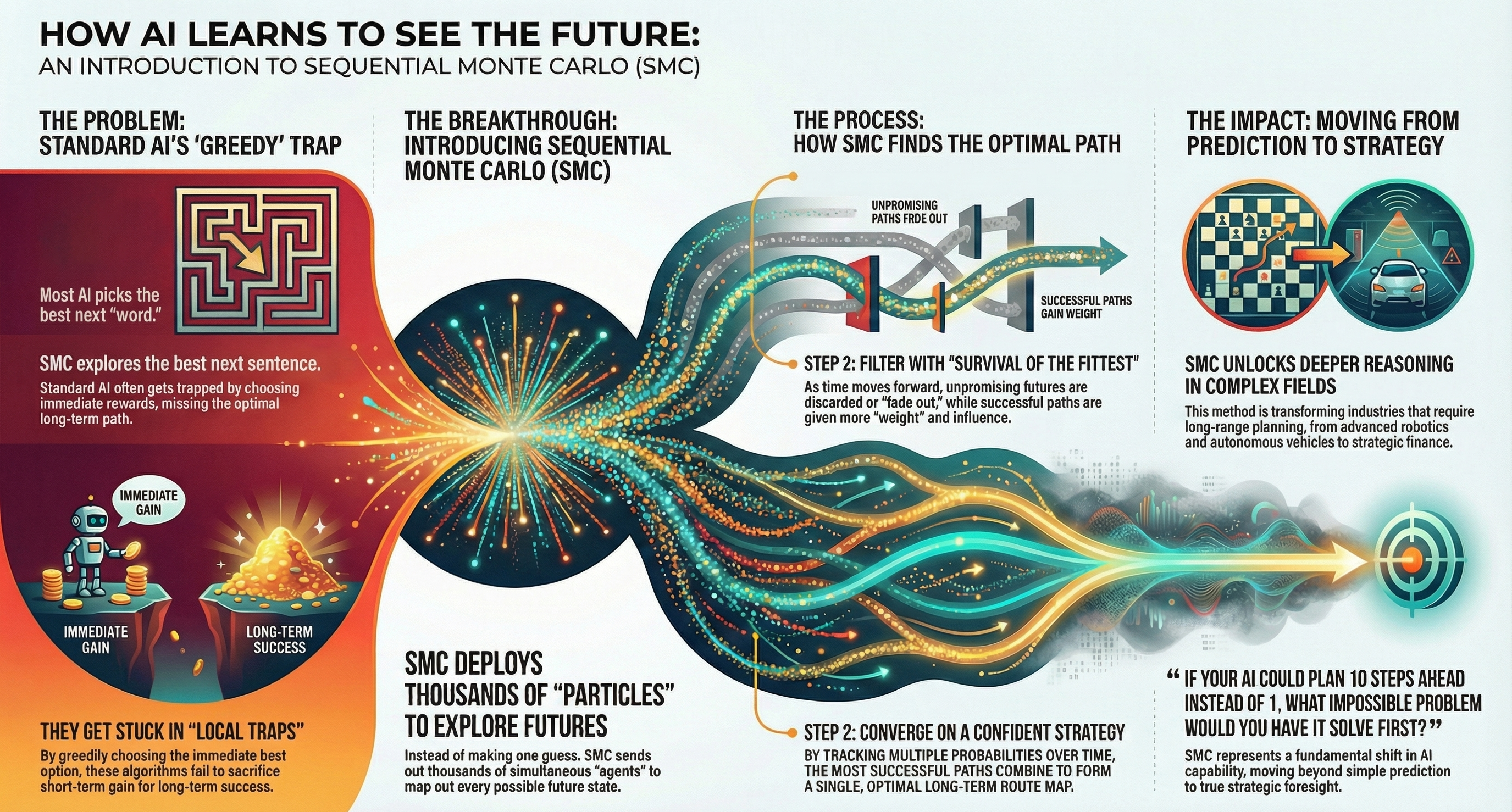

This is roughly how standard autoregressive language models generate text under greedy decoding. They predict the next token from everything before it and pick the locally best option at each step. This one-turn-at-a-time approach is remarkably effective for fluent text generation. It seems far less effective for complex reasoning, where the best next step depends on where you need to end up.

The Greedy Trap

A greedily decoded model often appears to get trapped by its own local optimization. It commits to an approach early (a proof strategy, a code architecture, a line of argument) because that approach looked best at the first step.

By the time the limitations of that approach become apparent, the model has no mechanism to backtrack and try a different path. It doubles down on the wrong direction because going forward is all it knows how to do.

This seems to be a fundamental limitation of greedy decoding. It explores one path through the reasoning space and commits to it. For simple problems, one path is often sufficient. For complex, multi-step reasoning — the kind that matters for real-world applications — a single path is rarely optimal.
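The trap can be made concrete with a small sketch. Everything here is hypothetical: `TOY_MODEL` is a hand-written table of next-token probabilities, not a real language model, and the token names and numbers are invented for illustration. Greedy decoding takes the most probable first token, which locks it out of the sequence that is most probable overall.

```python
# Hypothetical toy "model": maps a prefix to next-token probabilities.
# The tokens and numbers are made up purely for illustration.
TOY_MODEL = {
    (): {"A": 0.6, "B": 0.4},
    ("A",): {"x": 0.55, "y": 0.45},
    ("B",): {"z": 0.95, "w": 0.05},
}


def greedy_decode(model):
    """Pick the single most probable token at every step."""
    prefix, prob = (), 1.0
    while prefix in model:  # stop once the prefix has no continuations
        token, p = max(model[prefix].items(), key=lambda kv: kv[1])
        prefix, prob = prefix + (token,), prob * p
    return prefix, prob


def best_sequence(model, prefix=(), prob=1.0):
    """Exhaustively find the most probable complete sequence."""
    if prefix not in model:
        return prefix, prob
    candidates = [
        best_sequence(model, prefix + (token,), prob * p)
        for token, p in model[prefix].items()
    ]
    return max(candidates, key=lambda result: result[1])


print(greedy_decode(TOY_MODEL))  # greedy path: A then x
print(best_sequence(TOY_MODEL))  # best overall path: B then z
```

Greedy ends on the path A, x with a joint probability of roughly 0.33, while the path B, z reaches roughly 0.38. The exhaustive search is only feasible because the table is tiny; real vocabularies make it impractical, which is why approximate multi-path methods are interesting.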

Sequential Monte Carlo: Survival of the Fittest

Sequential Monte Carlo (SMC) introduces a fundamentally different approach. Instead of committing to one reasoning path, it explores many simultaneously. At each step, multiple candidate paths are evaluated, and the weakest are pruned while the strongest are extended.

Think of it as evolution applied to reasoning: survival of the fittest paths, not just the fittest next token.

The process works in three phases:

Generation — Multiple candidate continuations are sampled at each step, creating a branching tree of possible reasoning paths.

Evaluation — Each branch is scored against the target — does this path look like it's heading toward a correct, complete, well-structured answer?

Selection — Low-scoring branches are pruned and high-scoring branches are duplicated and extended, concentrating computational resources on the most promising directions.
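The three phases above can be sketched in a few lines. This is a minimal illustration under loud assumptions, not how any particular system implements it: the "model" is a uniform random proposal over three letters, and `score` is a hypothetical evaluator that already knows the target string `TARGET`. In a real setting the proposal would be a language model and the scorer something like a learned reward or verifier. Because the sketch resamples at every step, each path's weight is just the ratio of its new score to its old one.

```python
import random

TARGET = "abcab"  # hypothetical goal that the toy scorer knows about
VOCAB = "abc"


def propose(path, rng):
    """Generation: sample one next token (here, uniformly at random)."""
    return rng.choice(VOCAB)


def score(path):
    """Evaluation: positive score that doubles per position matching TARGET."""
    return 2.0 ** sum(a == b for a, b in zip(path, TARGET))


def smc_search(n_particles=50, seed=0):
    rng = random.Random(seed)
    particles = [""] * n_particles  # every path starts empty
    for _ in range(len(TARGET)):
        # Generation: extend every surviving path by one sampled token.
        extended = [p + propose(p, rng) for p in particles]
        # Evaluation: incremental weight = how much the new token helped.
        weights = [score(new) / score(old)
                   for new, old in zip(extended, particles)]
        # Selection: resample with replacement in proportion to weight, so
        # weak paths tend to disappear and strong paths get duplicated.
        particles = rng.choices(extended, weights=weights, k=n_particles)
    return max(particles, key=score)


print(smc_search())
```

The output depends on the seed and the number of particles; more particles make it more likely that a path matching the target survives to the end. Note that selection is probabilistic rather than a hard cutoff, which keeps some diversity among the surviving paths.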

The result isn't just better final answers. It seems to be qualitatively different reasoning. The model can explore a proof strategy, recognize it's failing, and shift its effort to a different approach that was kept alive in parallel. In effect it backtracks, something greedy decoding fundamentally cannot do.

From Prediction to Strategy

The shift from greedy decoding to SMC parallels what might be a broader shift in how AI reasons: from prediction (what comes next?) to strategy (what path reaches the goal?).

This could matter for AI effectiveness because many of the most valuable real-world tasks seem to be strategic, not predictive:

Diagnosing a complex system failure

Planning a multi-quarter business strategy

Writing code that must satisfy interacting constraints

These tasks require the ability to look ahead, evaluate trade-offs, and sometimes abandon a promising-looking path when a better one emerges.

SMC probably doesn't make the model smarter in itself. What it seems to do is make the model's reasoning process smarter — giving it the ability to explore, evaluate, and choose rather than simply predict.

The AI no longer drives without a map. It doesn't have a fixed map either. Instead, it builds the map as it goes, exploring multiple routes simultaneously and converging on the one most likely to reach the destination.

Related reading: Is AI Smarter or Just Luckier? — the grokking phenomenon and what it reveals about when AI reasoning is genuine versus pattern matching.