The Wrong Audience

There is a quiet assumption embedded in how most people interact with AI: we write prompts the way we write emails — concise, polished, optimized for human readability. We trim the context. We summarize the background. We get to the point quickly.

The problem is that the audience isn't human, at least not in the way that matters here.

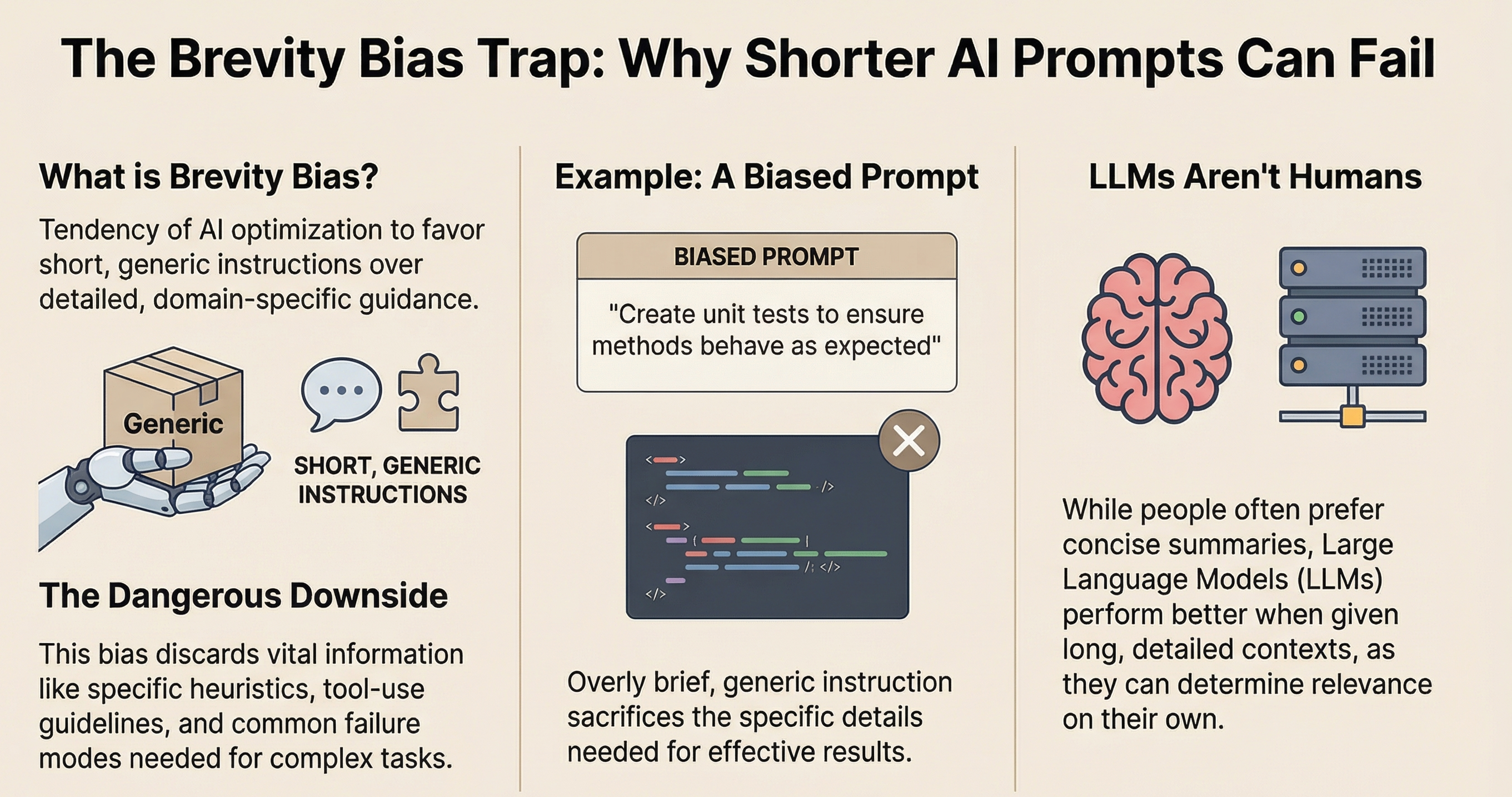

Large language models don't process context the way people do. A model can't read brevity as efficiency or courtesy; whatever you leave out is simply absent from its input. And when information is absent, the model fills the gap with whatever continuation seems most plausible. In other words, it guesses.

We call those guesses hallucinations. But often, the more useful diagnosis might be simpler: we starved the model of context because we were writing for the wrong reader.

Brevity Bias

Brevity bias is the tendency to compress prompts to their shortest useful form. It comes from good instincts — respect for the reader's time, clarity of communication, professional norms around conciseness. But these instincts are calibrated for human cognition, where the reader fills in gaps from shared experience, cultural context, and common sense.

An LLM has broad general knowledge from training, but it shares none of your particular situation: your project, your audience, your unstated goals. Every piece of context you omit because "it's obvious" is a piece of context the model genuinely does not have. The result isn't just less accurate output. It's output that sounds confident while being subtly wrong, because the model rarely signals "I'm guessing here because you didn't give me enough to work with."

What Brevity Costs

The cost shows up in specific, recognizable ways:

Responses that address the letter of the prompt but miss the spirit

Code that compiles but misses edge cases that were "obvious" from context never provided

Analysis that uses the right framework but applies it to the wrong aspect of the problem

These probably aren't failures of the model so much as failures of context engineering — the discipline of constructing prompts that give the model the information it actually needs, in the format it can actually use, regardless of whether a human reader would find that format verbose or redundant.

From Prompt Craft to Context Engineering

The shift is from thinking about prompts as communication (human to machine) to thinking about them as context construction: engineering the information environment in which the model reasons.

In practice, this means:

Including background that seems obvious

Providing examples even when the task seems clear

Specifying constraints that a human colleague would infer

Being explicit about what "good" looks like
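As a rough illustration of the four items above, here is a minimal Python sketch that assembles a prompt from explicit sections. Everything in it is hypothetical and chosen for illustration: the `PromptContext` class, the `build_prompt` function, the section titles, and the date-parsing task. It calls no model API; it only shows how the same one-line task looks once the "obvious" context is written down.

```python
from dataclasses import dataclass, field


@dataclass
class PromptContext:
    """Hypothetical container for the four kinds of context listed above."""
    task: str
    background: list[str] = field(default_factory=list)
    examples: list[tuple[str, str]] = field(default_factory=list)  # (input, expected output)
    constraints: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)


def _bullets(items: list[str]) -> str:
    return "\n".join(f"- {item}" for item in items)


def build_prompt(ctx: PromptContext) -> str:
    """Assemble a plain-text prompt, skipping any section left empty."""
    sections = [("Task", ctx.task)]
    if ctx.background:
        sections.append(("Background", _bullets(ctx.background)))
    if ctx.examples:
        rendered = "\n\n".join(f"Input: {i}\nOutput: {o}" for i, o in ctx.examples)
        sections.append(("Examples", rendered))
    if ctx.constraints:
        sections.append(("Constraints", _bullets(ctx.constraints)))
    if ctx.success_criteria:
        sections.append(("What good looks like", _bullets(ctx.success_criteria)))
    return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


# The prompt a human colleague would probably accept as complete.
terse = build_prompt(PromptContext(task="Write a function that parses dates."))

# The same task with the "obvious" context made explicit.
detailed = build_prompt(PromptContext(
    task="Write a function that parses dates.",
    background=[
        "Dates come from a CSV export typed in by European customers.",
        "The codebase is Python 3.11 with no third-party dependencies.",
    ],
    examples=[("03/04/2024", "2024-04-03"), ("3.4.24", "2024-04-03")],
    constraints=[
        "Treat the first number as the day, not the month.",
        "Raise ValueError on unparseable input rather than returning None.",
    ],
    success_criteria=["Returns an ISO 8601 date string (YYYY-MM-DD)."],
))

print(detailed)
```

The terse version is a single sentence; the detailed one is several times longer and would read as over-explained in an email. Under the argument here, that extra length is the point: each added line removes a guess the model would otherwise have to make.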

It feels unnatural — even wasteful — to anyone trained in concise professional communication. But the model isn't your colleague. It's a reasoning engine that operates on exactly the context you provide, no more and no less.

The question worth asking is not "how short can I make this prompt?" but "what does this model need to reason well about this problem?"

Next in this series: Your AI Has Amnesia — what happens when even generous context hits the wall of context collapse.